A couple of days ago, I helped a friend with her master's thesis. Her supervisor required her to re-implement in code, and validate, the models from some of the studies cited in her thesis. This kind of request is quite common in research, but she was completely stuck at this step because she had no coding experience at all.

She had already struggled for half a day, trying various domestic AI tools, but the code either wouldn't run or the output was completely off the mark. In the end, she had no choice but to ask me for help.

By the time she reached out to me, she was already a bit distraught. I suggested she give ChatGPT another round with better prompts. It still didn't work.

So, I asked her to send me the materials. When I opened them, I saw the paper was a PDF. At this point, I had a basic hunch: the problem likely wasn't the model's capability, but the input format. I then dropped the file into Antigravity and ran it through the Claude model inside. The model quickly provided a rough modeling plan, but also noted that the current environment couldn't directly parse the PDF content. What it had actually done was search online based on the title and generate suggestions by combining common research methods. In other words, it hadn't actually read the paper.

So, the next thing I did was very simple: I used PDFgear to export the PDF as images and fed them back to the model. After running the generated code a few times and fixing the bugs that surfaced, the mathematical model ran smoothly. If you exclude my intermittent griping and her emotional breakdown on the other end of the internet connection, the whole process took about half an hour.

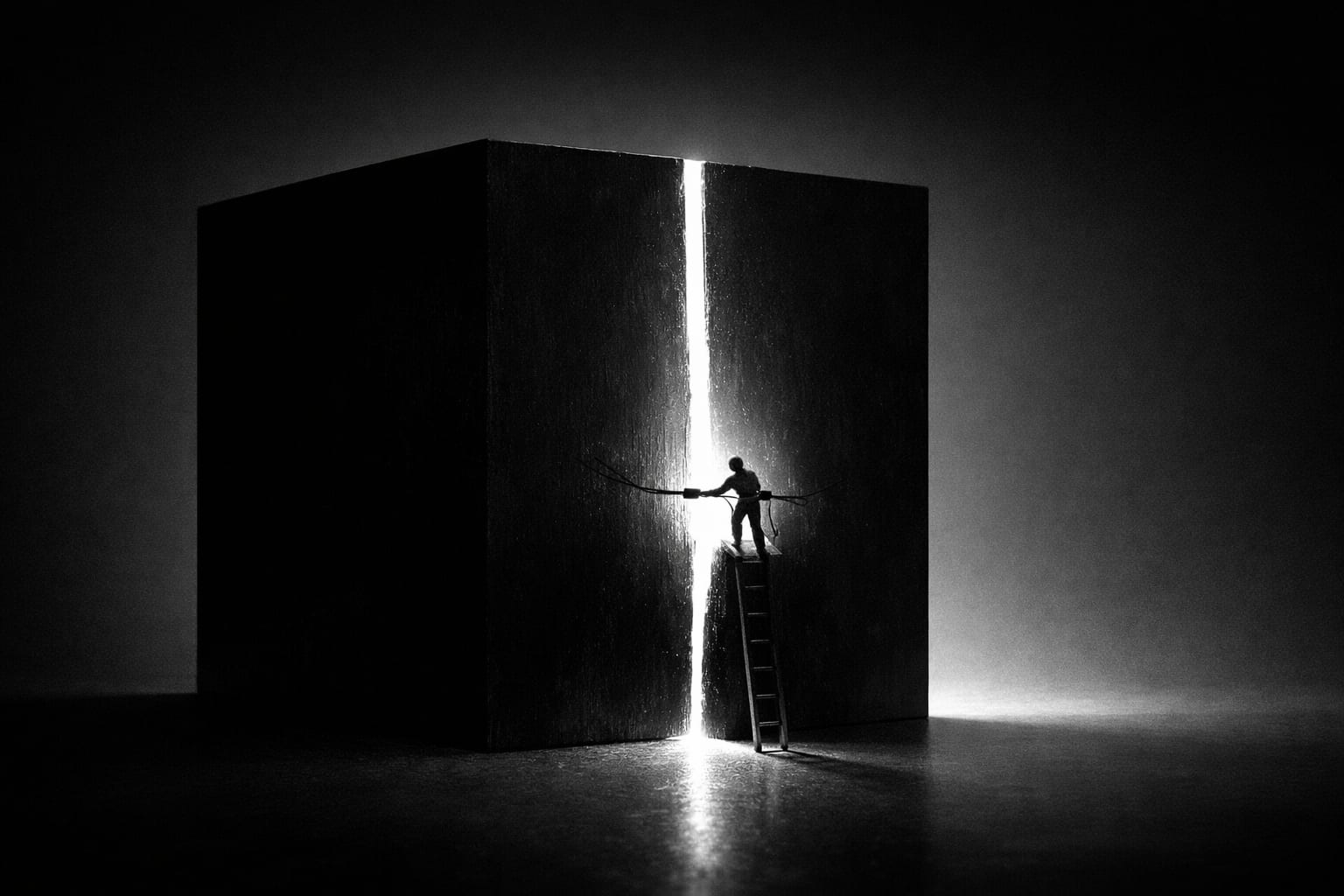

This incident drove home a saying for me: the AI era is the best of times, and also the worst of times.

From an efficiency perspective, this is indeed a very good era.

If we rewind a few years, completing mathematical modeling often required reading the paper yourself, understanding the research methods, looking up information, writing code, and repeatedly tuning parameters. Even for someone proficient, it could take a full day. The current process is much simpler: let the model handle understanding the paper structure and generating the code framework, while the person only needs to do some checking and debugging. Many tasks that in the past only professional researchers could complete can now be done by ordinary people with the help of tools. As long as you know how to ask questions, verify results, and fix a bit of code, you can break down complex tasks and solve them step by step.

At the same time, this is also a somewhat frustrating era.

My friend was stuck on nothing more than an incorrect input format. The models never read the paper's content, fabricated a pile of nonsense, and naturally the whole process couldn't proceed. Before she found me, she had already spent a lot of time on various "magical" AI tools, but none of them clearly pointed out the real problem. Only Claude noted that the current environment couldn't parse the PDF. A single extra step would have solved it: asking the user to convert the PDF to images before input, or generating a PDF parser directly. But most tools didn't proactively suggest that step.

This incident makes me increasingly feel that the real gap between top-tier models may not necessarily lie in intelligence itself. People like to discuss model parameters, leaderboards, benchmarks, as if whoever is "smarter" will bring a completely different experience. But in real-world use, the experience gap often appears in engineering details.

Can a system recognize input format issues, explain the limitations of its current reasoning, and offer actionable fixes? These details are crucial for users. Often, users don't need more complex reasoning capabilities; they just need a clear warning: your input might be problematic.
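To make that concrete, here is a minimal sketch of the kind of pre-flight check I have in mind. It is not how any real tool works; `check_input` and its messages are hypothetical names I made up, and the only assumption about formats is that a PDF announces itself with a `%PDF-` header near the start of the file.

```python
from typing import Optional


def check_input(filename: str, data: bytes) -> Optional[str]:
    """Return a warning for the user, or None if the input looks readable.

    Hypothetical helper: it assumes the surrounding tool can only read
    UTF-8 text and cannot parse PDFs.
    """
    # A PDF starts with a "%PDF-" header; looking at the first 1 KB
    # tolerates a few stray bytes in front of it.
    if b"%PDF-" in data[:1024]:
        return (
            f"{filename} is a PDF, which this environment cannot parse. "
            "Convert it to images or plain text and upload it again."
        )
    # Nothing but whitespace means there is nothing to read.
    if not data.strip():
        return f"{filename} is empty, so there is nothing to read."
    # Anything that is not valid UTF-8 is probably a binary file.
    try:
        data.decode("utf-8")
    except UnicodeDecodeError:
        return (
            f"{filename} does not look like plain text. "
            "The model may not be able to read it."
        )
    return None


# Example: the situation my friend ran into.
print(check_input("paper.pdf", b"%PDF-1.7\n"))
```

A dozen lines like these don't make a model any smarter. They just tell the user what went wrong before the model starts guessing.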

Recently, many people like to talk about AI Agents, imagining a system that can automatically understand requirements, call tools, and complete complex tasks. It sounds wonderful, but in my view, reality is still far from that stage.

In this small case, the entire process still involved a person first judging where the problem might lie, then choosing tools, adjusting the input format, verifying the model's results, and finally fixing the code. AI did make many steps faster, but the control of the process still remained in human hands. In the short term, this is unlikely to change fundamentally.

What's even more interesting is that the truly important abilities in the AI era haven't actually changed. It's still about understanding the problem, breaking it down, judging the capabilities and limitations of tools, and combining different tools to solve problems.

Many early internet users are actually quite familiar with this way of thinking. In the days when connection speeds were only tens of KB per second, finding a single picture might mean scouring forums, FTP servers, and various resource sites. Back then, everyone naturally realized that tools had limitations, so you had to constantly try different methods and piece various tools together.

But today's internet environment is completely different. The vast majority of Gen Z have grown up with recommendation algorithms and streaming platforms, where content is automatically pushed to them, and they only need to click to play. All tools are perfectly packaged, ready to use out of the box. Over time, people's understanding of tools has actually diminished.

Tools have become more powerful, but many people are increasingly unfamiliar with their boundaries. When they encounter a problem not covered by mainstream tools, they often have no choice but to get frustrated or give up.

So, I'm increasingly convinced that the real gap between people in the future might not be about who has access to AI, but about who understands tools better. There will be more and more people who can use a single tool, but there will still be very few who can combine multiple tools to solve problems.

In the AI era, this ability will become even more important. After all, AI itself is just one of the tools, and tools never solve problems by themselves. What truly solves problems is always the person.